Accurate detection and tracking of road users is essential for driverless cars and many other smart mobility applications. As no single sensor can provide the required accuracy and robustness, the output from several sensors needs to be combined. Radar and video are an especially good match, because their weaknesses and strengths complement each other.

Each kind of sensor technology (e.g. radar, video, LiDAR, ultrasound) has its own limitations. For instance, cameras don’t work well at night or in dazzling sunlight, and radar can be confused by reflective metal objects such as rubbish bins or soda cans.

Fusing the output of these different sensors is thus very important for accurate object detection.

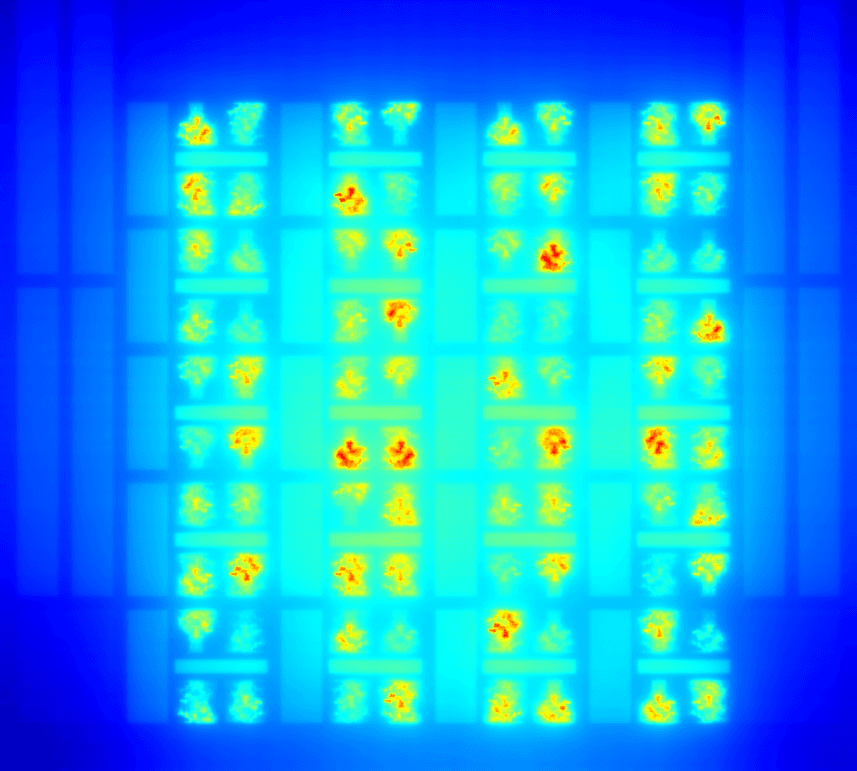

Currently, sensor fusion usually happens at a relatively late stage, after each sensor has performed object detection based on its own limited collection of sensor data. As a result, much of the potential of sensor fusion is lost, especially in circumstances where one sensor underperforms compared to another. To mitigate this effect, researchers from IPI developed a cooperative fusion approach that adds an extra feedback loop: the processing pipelines of the different sensors exchange low- or mid-level information before they reach a final detection decision. In this way, sensors can resolve ambiguities in their own detection process, resulting in better data association at the object level and improved tracking performance.

Not only is this method much more powerful than the late object-level fusion that is commonly used today, it is also easier to implement, validate and homologate than the holistic approaches suggested in academic literature, which consider all information from all sensors all the time.

Though smart vehicles might be the most obvious application for this technology, accurate sensor fusion is also important in many other areas, such as smart intersections, retail analytics and surveillance.

About David Van Hamme

Since 2007, David Van Hamme has been a researcher in the Image Processing and Interpretation (IPI) research group, an imec research group at Ghent University, part of the Telecommunications and Information Processing (TELIN) department. He also obtained the title of Doctor of Engineering in 2016.

In 2007 he graduated as an Industrial Electronics Engineer from Hogeschool Gent. His research topics include texture segmentation, video analysis and sensor fusion for traffic-related applications and intelligent vehicles.

Published on:

13 May 2019