Francky Catthoor, imec fellow

Many emerging applications will need the computing power that was typical of supercomputers a few years ago. But they require that power to be crammed into small, unobtrusive applications that use little energy and have a guaranteed, superfast response time. Using traditional scaling methods, that is, scaling at the lowest levels of the hardware hierarchy as the industry has pursued over the past decades, we can still gain some ground, but not enough. So we’ll have to look at higher levels, developing technology that optimizes the performance and energy use of entire functions or applications.

Called system-technology co-optimization (STCO), this approach is a huge and largely untapped territory. It will allow us to win back the energy efficiency that has been lost over the past decades, mainly because the industry has concentrated on the low-hanging fruit of dimensional scaling in conventional system architectures. It is estimated that STCO might bring energy savings of several orders of magnitude for a given performance. But because of the complexity and diversity of solutions, getting there might take a long time.

Too much data traffic

For many of today’s applications, the biggest energy drain is the shuttling back and forth of data between the processor and the various levels of storage. That is especially so for applications that perform operations on huge datasets: think of the results of DNA sequencing, the links in a social media network, or the output of high-definition specialty cameras. For such data-dominated applications and systems, all this data shuffling imposes an energy cost that is orders of magnitude larger than that of the actual processing. Moreover, it may cause serious throughput problems, slowing computation down in sometimes unpredictable ways.
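The argument can be made concrete with a back-of-the-envelope split of total energy into a compute part and a data-movement part. The sketch below is only an illustration: the function name `energy_breakdown` and all numbers passed to it are hypothetical placeholders, not measurements of any real system.

```python
def energy_breakdown(num_ops, energy_per_op, num_transfers, energy_per_transfer):
    """Split total energy into compute and data movement (same energy unit)."""
    compute = num_ops * energy_per_op
    movement = num_transfers * energy_per_transfer
    return {
        "compute": compute,
        "movement": movement,
        "movement_share": movement / (compute + movement),
    }


# Placeholder values only: one transfer per operation, with each transfer
# assumed to cost 100 times as much as the operation itself.
example = energy_breakdown(num_ops=1000, energy_per_op=1.0,
                           num_transfers=1000, energy_per_transfer=100.0)
print(example)
```

Under these assumed ratios, almost all of the energy goes to moving data, which is why reducing the number of transfers matters more than making each operation cheaper.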

One way to overcome this would be to design hybrid architectures where a number of operations are performed in the same physical location where the data are stored, without having to move the data back and forth. Examples are performing logic operations on large sparse matrices, error correction on wireless sensor data, or preprocessing raw data captured by an image sensor.

Called ‘Computation in Memory’ or CIM, this idea has been around for some time, yet now it is ready to be taken seriously.

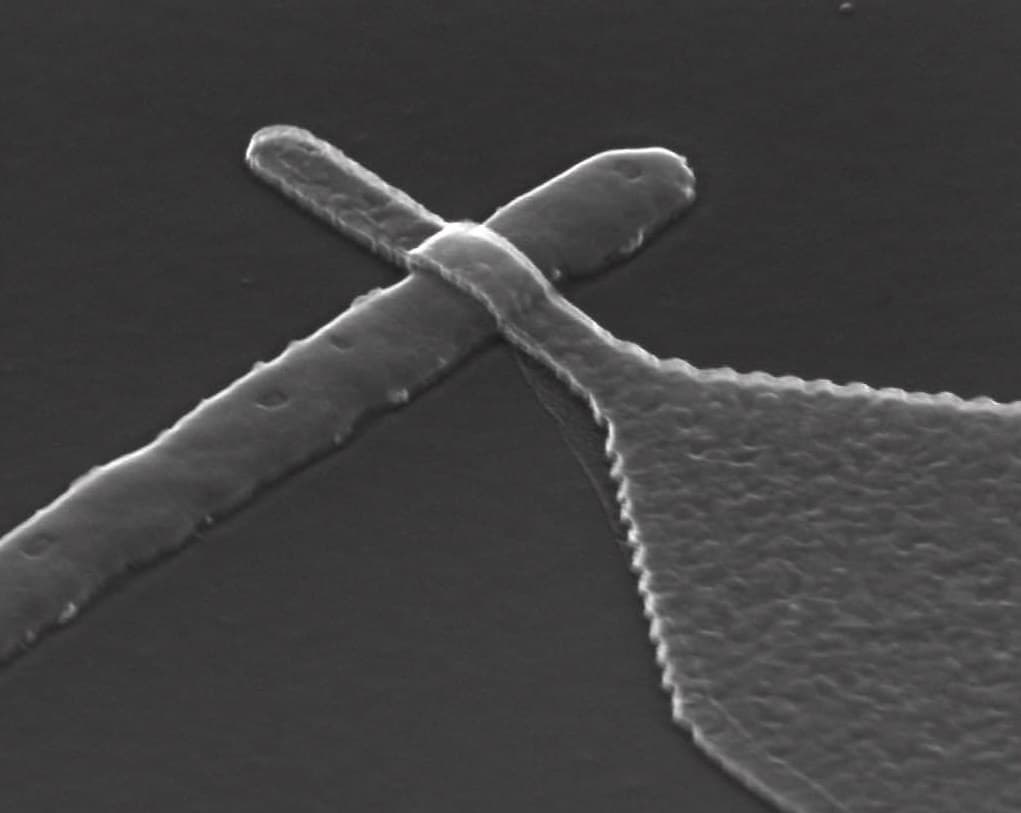

An especially enticing proposition is to make use of the physical characteristics of memory technology to do the computations, for example with resistive memory technology.

Resistive memory works on the principle that a current changes the resistance of the memory element. Using two clearly distinct current levels, resistive memory can be used as a standard digital memory. But its real power lies in the fact that the resistance of the memory element can vary along a continuum, and that its value can be seen as a function of the current stimulation and its previous value. That property can be used to implement alternative, non-Boolean computing paradigms.
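A toy model may help to picture this behavior. The sketch below is not a physical device model: the class `ToyResistiveCell`, its linear update rule, and every constant in it are assumptions chosen for illustration. It only shows the two views described above, a resistance that depends on the applied current and the previous value, and a digital readout obtained by thresholding.

```python
from dataclasses import dataclass


@dataclass
class ToyResistiveCell:
    """Toy resistive memory element; all constants are hypothetical."""
    r_min: float = 1_000.0        # lowest resistance in ohms (assumed)
    r_max: float = 100_000.0      # highest resistance in ohms (assumed)
    resistance: float = 100_000.0
    sensitivity: float = 5.0e7    # ohms changed per ampere per pulse (assumed)

    def stimulate(self, current: float) -> float:
        """New resistance is a function of the current and the previous value."""
        updated = self.resistance - self.sensitivity * current
        self.resistance = min(self.r_max, max(self.r_min, updated))
        return self.resistance

    def read_bit(self) -> int:
        """Digital view: low resistance reads as 1, high resistance as 0."""
        threshold = (self.r_min + self.r_max) / 2
        return 1 if self.resistance < threshold else 0


# Digital use: two clearly distinct current levels drive the cell to its limits.
cell = ToyResistiveCell()
cell.stimulate(2e-3)
print(cell.read_bit())   # 1
cell.stimulate(-2e-3)
print(cell.read_bit())   # 0

# Analog use: small pulses move the resistance along a continuum.
analog = ToyResistiveCell()
for _ in range(3):
    analog.stimulate(1e-4)
print(analog.resistance)
```

In this simplified picture, the same element stores either a bit or an accumulated analog quantity, depending only on how it is driven and read.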

Accelerators in memory

This year, for the first time, imec presented CIM to its partners, showing, among other things, a new way of classifying the various options. This fits in with imec’s ongoing effort to complement scaling at the technology level with DTCO (design-technology co-optimization) and, one level higher, STCO. The concept was well received, especially from the viewpoint of equipping systems with dedicated co-processors that perform specialized tasks in memory, the so-called computation-in-memory accelerators (CIMA).

Various academic groups have already made proposals for CIMA architectures. These differ widely in the applications they can address, in their position in the memory hierarchy, and in the way parallelism is exploited.

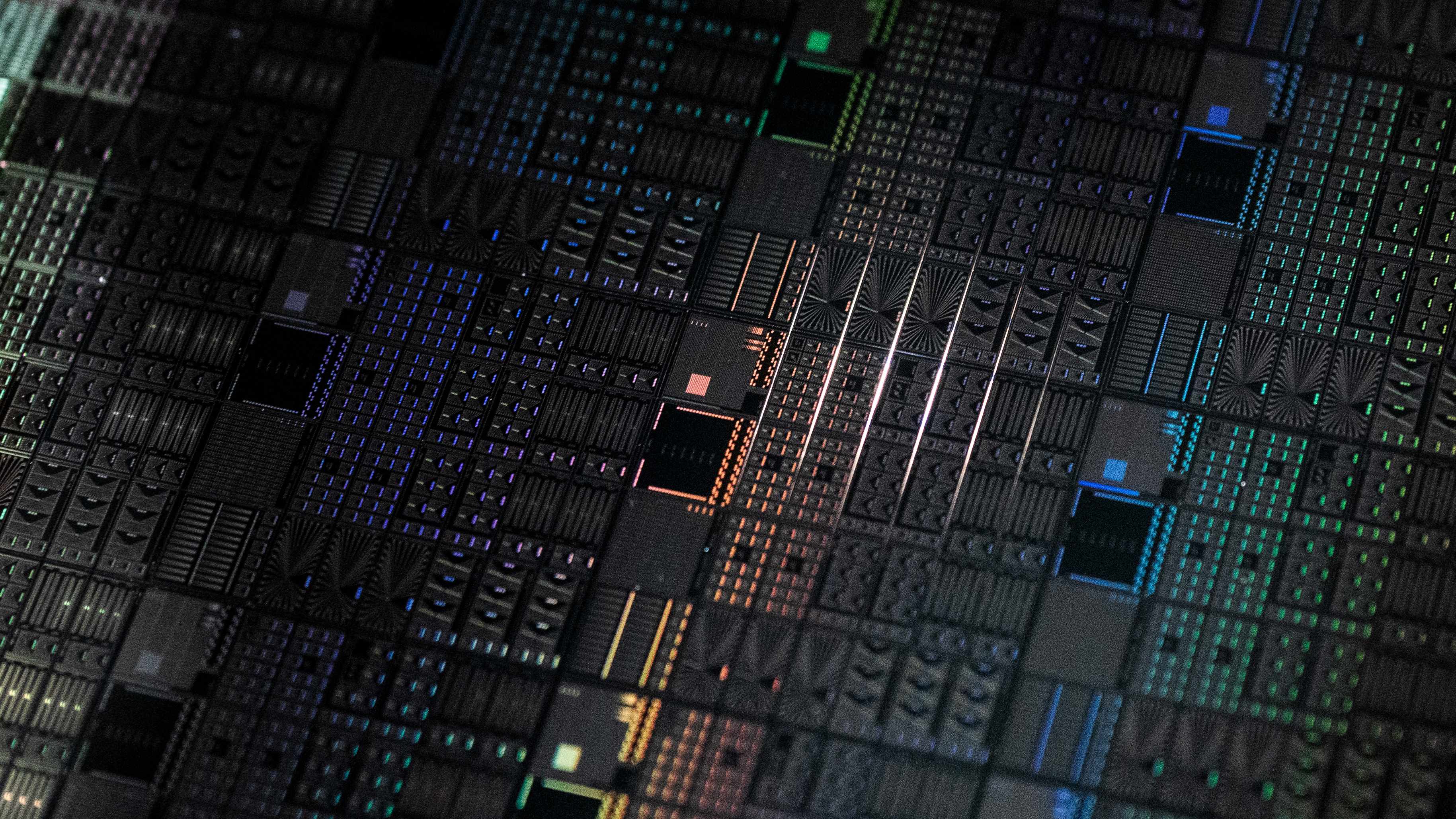

As for where in the memory hierarchy CIM is best performed, there are a few fundamental options. The first is to do the processing with a dedicated processor close to the peripheral memory, e.g. integrated in a hybrid memory cube or a high-bandwidth memory. Second, processing could be done on the memory stack, but still outside the main processor’s memory arrays, so that the results may still be shared between processors. And last, it could be done in the main processor’s memory arrays. Until now, the academic community has paid most attention to this last option.

Another dimension is the way parallelism is handled. The standard technique is to parallelize at the level of tasks, with each task shuttling the data it needs between memory and computation. But if we keep the data in memory (or close to memory), we could implement either a data-level parallel solution or an instruction-level parallel solution. In the first case, chunks of data (e.g. rows of a picture) are processed in parallel by the same function (e.g. error correction). In the second case, one dataset is processed by several instructions in parallel.
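The difference between the two in-memory options can be sketched in software. The example below is only an analogy: Python threads stand in for parallel hardware units, and the function names (`correct_row`, `data_level_parallel`, `instruction_level_parallel`) as well as the clamping used as a stand-in for error correction are assumptions made for illustration.

```python
from concurrent.futures import ThreadPoolExecutor


def correct_row(row):
    """Stand-in for error correction: clamp pixel values to the range 0..255."""
    return [min(255, max(0, pixel)) for pixel in row]


def data_level_parallel(image):
    """Data-level: every row goes through the same function in parallel."""
    with ThreadPoolExecutor() as pool:
        return list(pool.map(correct_row, image))


def instruction_level_parallel(dataset, operations):
    """Instruction-level: several operations run in parallel on one dataset."""
    with ThreadPoolExecutor() as pool:
        futures = [pool.submit(op, dataset) for op in operations]
        return [future.result() for future in futures]


image = [[-5, 10, 300], [0, 128, 999]]
print(data_level_parallel(image))                            # [[0, 10, 255], [0, 128, 255]]
print(instruction_level_parallel([3, 1, 2], [min, max, sum]))  # [1, 3, 6]
```

In both cases the data stays where it is and only the pattern of work changes: many data chunks with one function, or one dataset with many functions.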

Exploring technology and applications

Having presented CIM to its partners, imec will now explore the various proposals and how they could be implemented technologically in a way that fits in with the technology roadmaps and solutions of the semiconductor industry. The plan is to develop and demonstrate micro-architectures for CIMA in conjunction with attractive application cases, exploring the range of possible microarchitecture-circuit-technology options.

In that respect, in 2018 we’ll start working on MNEMOSENE (computation-in-memory architecture based on resistive devices), a European project under the Horizon 2020 program. The project’s goals are to develop and demonstrate CIM based on resistive computing for specific applications. This includes, for example, designing a CIM simulator based on models of memristor devices and building blocks. MNEMOSENE is coordinated by Delft University of Technology. All the partners in this project have been doing top-level research in the field for some time, and together they form a formidable consortium that is sure to move the field forward. In addition, imec has strong ongoing bilateral collaborations with several of these partners, especially with Delft University of Technology.

CIM fits into a new system-level concept of scaling, where we isolate functions and applications and see how we can implement a matching architecture. Computation in Memory will be one of those solutions, next to e.g. neuromorphic computing or wave-based computing. The higher the level of the function we can use this for, going up to complete applications, the larger the potential gains become. That way, we could envisage an accelerator for image processing, an accelerator for DNA sequence processing, or an accelerator that implements security. These will then be plugged into systems, like Lego blocks. The result will be much more heterogeneous than today’s systems.

Francky Catthoor is an imec fellow and professor at the electrical engineering department of the KU Leuven. He obtained his PhD in electrical engineering in 1987 and then started work at imec, heading research efforts into high-level and system synthesis techniques and architectural methodologies. Since 2000 he has also been involved in application and deep submicron technology aspects, biomedical imaging and sensor nodes, and smart photovoltaic modules. Francky Catthoor has been associate editor for several IEEE and ACM journals, such as Transactions on VLSI Signal Processing, Transactions on Multimedia, and ACM TODAES. He was the program chair of several conferences, including ISSS'97 and SIPS'01, and was elected an IEEE Fellow in 2005.

Published on:

15 December 2017