Radar and video: a particularly interesting sensor fusion match

The ability of tomorrow’s cars to detect road users and obstacles rapidly and accurately will be instrumental in reducing the number of traffic fatalities. Yet, no single sensor or perceptive system covers all needs, scenarios, and traffic/weather conditions.

Cameras, for instance, do not work well at night or in dazzling sunlight; and radar can get confused by reflective metal objects. But when combined, their respective strengths and weaknesses perfectly complement one another. Enter radar-video sensor fusion.

Sensor fusion combines a variety of sensory inputs to build an improved perceptive (3D) model of a vehicle’s surroundings. Using that model and deep learning techniques, the system then classifies detected objects into categories such as cars, pedestrians, cyclists, buildings, and sidewalks. Those insights, in turn, form the basis of the intelligent driving and anti-collision decisions made by advanced driver-assistance systems (ADAS).

Cooperative radar-video sensor fusion: the new kid on the block

Today’s most popular type of sensor fusion is called late fusion. It only fuses sensor data after each sensor has performed object detection and has taken its own ‘decisions’ based on its own, limited collection of data. The main drawback of late fusion is that every sensor throws away all the data it deems irrelevant, so much of the potential of sensor fusion is lost. In practice, it might even cause a car to run into an object that has remained below each individual sensor’s detection threshold.
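To make that drawback concrete, the toy sketch below (an illustration, not imec’s code) compares late fusion, where each sensor thresholds its own confidence before anything is fused, with a hypothetical scheme that pools the raw scores first. The scores, the 0.5 threshold and the noisy-OR combination rule (which assumes the two sensors err independently) are all assumptions chosen for illustration.

```python
# Illustrative only: toy per-object confidences in [0, 1] from two sensors.

def late_fusion(camera_score: float, radar_score: float, threshold: float = 0.5) -> bool:
    # Each sensor decides on its own; sub-threshold evidence is discarded here
    camera_detects = camera_score >= threshold
    radar_detects = radar_score >= threshold
    # The fusion stage only sees the two binary decisions
    return camera_detects or radar_detects


def pooled_evidence(camera_score: float, radar_score: float, threshold: float = 0.5) -> bool:
    # Hypothetical alternative: combine the raw scores before thresholding
    # (noisy-OR, which assumes the sensors err independently)
    combined = 1.0 - (1.0 - camera_score) * (1.0 - radar_score)
    return combined >= threshold


print(late_fusion(0.40, 0.45))      # False: both sensors stay below the threshold
print(pooled_evidence(0.40, 0.45))  # True: the pooled score is about 0.67
```

The object is real in both cases; only the point at which evidence is thrown away differs.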

In contrast, early fusion (or low-level data fusion) combines all low-level data from every sensor in one intelligent system that sees everything. However, this requires a great deal of computing power and massive bandwidth, including high-bandwidth links from every sensor to the system’s central processing engine.

Fig 1: Late fusion: sensor data are fused after each individual sensor has performed object detection and has drawn its own ‘conclusions’. Source: imec.

Fig 2: Early fusion builds on all low-level data from every sensor – and combines those in one intelligent system that sees everything. Source: imec.

In response to these shortcomings, researchers from IPI (an imec research group at Ghent University, Belgium) have developed the concept of cooperative radar-video sensor fusion. It features a feedback loop in which the sensors exchange low-level or mid-level information to influence each other’s detection processing. If a car’s radar system suddenly picks up a strong reflection, for instance, the detection threshold of the on-board cameras is automatically adjusted to compensate. As a result, a pedestrian who would otherwise be hard to detect is spotted, without the system becoming overly sensitive and prone to false positives.
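The feedback idea can be sketched in a few lines. In this minimal sketch, a camera candidate only gets a relaxed detection threshold when a strong radar return lies close to it, so the camera is not made more sensitive everywhere. The class names, thresholds, gate distance and the simple distance check are all hypothetical assumptions; the article does not describe how IPI implements its feedback loop.

```python
import math
from dataclasses import dataclass


@dataclass
class CameraCandidate:
    x: float      # metres ahead of the vehicle
    y: float      # lateral offset in metres
    score: float  # camera detector confidence in [0, 1]


@dataclass
class RadarReturn:
    x: float
    y: float
    strength: float  # normalised reflection strength in [0, 1] (assumed scale)


def cooperative_detect(candidates, radar_returns, base_threshold=0.6,
                       relaxed_threshold=0.35, strong_return=0.7, gate_m=1.5):
    """Keep camera candidates, lowering the threshold only near strong radar returns."""
    accepted = []
    for cand in candidates:
        # Radar-to-camera feedback: is there a strong return close to this candidate?
        supported = any(
            r.strength >= strong_return
            and math.hypot(r.x - cand.x, r.y - cand.y) <= gate_m
            for r in radar_returns
        )
        threshold = relaxed_threshold if supported else base_threshold
        if cand.score >= threshold:
            accepted.append(cand)
    return accepted


candidates = [CameraCandidate(18.0, 1.0, 0.45), CameraCandidate(30.0, -4.0, 0.45)]
radar = [RadarReturn(18.5, 0.8, 0.9)]
print(len(cooperative_detect(candidates, radar)))  # 1: only the radar-supported candidate is kept
```

The second candidate has the same camera score but no radar support and is rejected, which is how a local adjustment avoids the false positives that a globally lowered threshold would cause.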

A 15% accuracy improvement over late fusion in challenging traffic & weather conditions

Last year, the IPI researchers showed that their cooperative sensor fusion approach outperforms the late fusion method commonly used today. It is also easier to implement than early fusion, because it avoids early fusion’s bandwidth issues and practical implementation limitations.

Evaluated on a dataset of complex traffic scenarios in a European city center, their system tracked pedestrians and cyclists 20% more accurately than a camera-only system. What is more, its first detection came a quarter of a second earlier than that of competing approaches.

“And over the past months, we have continued to fine-tune our system, improving its pedestrian detection accuracy even further, particularly in challenging traffic and weather conditions,” says David Van Hamme, one of the IPI researchers.

“In easy scenarios (i.e., in the daytime, without occlusions, and in scenes of limited complexity), our approach now delivers a 41% accuracy improvement over camera-only systems and a 3% accuracy improvement over late fusion,” he says.

“But perhaps even more important is the progress we have made in cases of bad illumination, pedestrians emerging from occluded areas, crowded scenes, etc. After all, these are the situations in which pedestrian detection systems really have to prove their worth. In such difficult circumstances, the gains brought by cooperative radar-video sensor fusion are even more impressive, with a 15% improvement over late fusion,” Van Hamme adds.

Fig 3: Comparing the F2 scores of various pedestrian detection methods, both in easy scenarios (graph on the left) and in more challenging circumstances (graph on the right). The F2 score provides an objective measure of the systems’ accuracy and gives a high weight to miss rates (false negatives). In both scenarios, cooperative radar-video sensor fusion outperforms its camera-only and late fusion contenders. Source: imec.
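For reference, the F2 score is the F-beta score with β = 2, which weights recall (the share of pedestrians actually found) more heavily than precision. Written in terms of true positive (TP), false positive (FP) and false negative (FN) counts, F_β = (1 + β²)·TP / ((1 + β²)·TP + β²·FN + FP). A minimal Python sketch; the counts in the example are made up:

```python
def f_beta(tp: int, fp: int, fn: int, beta: float = 2.0) -> float:
    """F-beta score from detection counts; beta > 1 penalises misses (FN) more than false alarms (FP)."""
    b2 = beta * beta
    denom = (1 + b2) * tp + b2 * fn + fp
    return (1 + b2) * tp / denom if denom else 0.0


# Same number of errors, different kinds (hypothetical counts)
print(f"{f_beta(tp=80, fp=20, fn=0):.3f}")  # 0.952: 20 false alarms
print(f"{f_beta(tp=80, fp=0, fn=20):.3f}")  # 0.833: 20 missed pedestrians
```

Twenty misses cost noticeably more than twenty false alarms, which is why F2 suits a task where an undetected pedestrian is the costliest error.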

Significant latency improvements

David Van Hamme: “And that is not all. We have also made great progress in minimizing latency, or tracking delay. In difficult weather and traffic conditions, for example, we now achieve a latency of 411 ms. That is an improvement of more than 40% over both camera-only systems (725 ms) and late fusion (696 ms).”
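The “more than 40%” figure can be checked directly from the three latencies quoted above; a short sketch:

```python
def relative_reduction(baseline_ms: float, new_ms: float) -> float:
    """Fractional latency reduction of new_ms relative to baseline_ms."""
    return (baseline_ms - new_ms) / baseline_ms


cooperative_ms = 411  # cooperative radar-video fusion, from the quote above
for name, baseline_ms in [("camera-only", 725), ("late fusion", 696)]:
    print(f"{name}: {relative_reduction(baseline_ms, cooperative_ms):.1%}")
# camera-only: 43.3%
# late fusion: 40.9%
```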

Fig 4: Evaluated on scenes that are ‘Twilight’ or ‘Nighttime’, ‘occluded’, and contain ‘many vulnerable road users (VRUs)’, the latency of cooperative radar-video sensor fusion has been reduced to 411 ms. Source: imec.

In pursuit of further breakthroughs

“We are absolute pioneers when it comes to detecting vulnerable road users using combined radar and video systems. The gains we have presented show that our approach holds great potential. We believe it may very well become a serious competitor to today’s pedestrian detection technology, which typically relies on more complex, more cumbersome, and more expensive lidar solutions,” says David Van Hamme.

“And we are looking forward to pursuing even more breakthroughs in this domain,” he adds. “Expanding our cooperative radar-video sensor fusion approach to other cases, such as vehicle detection, is one of them. Building clever systems that can deal with sudden defects or malfunctions is another topic we would like to tackle. And finally, we want to explore breakthroughs in the underlying neural networks as well.”

“One concrete shortcoming of today’s AI engines is that they are trained to detect as many vulnerable road users as possible. But we argue that this might not be the best approach to reducing the number of traffic fatalities. Is it really necessary to detect that one pedestrian who has just finished crossing the road fifty meters in front of your car? We think not. Those computational resources are better spent elsewhere. Translating that idea into a neural network that prioritizes detecting the vulnerable road users in a car’s projected trajectory is one of the research topics we would like to explore together with one or several commercial partners,” Van Hamme concludes.
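As a rough sketch of what “prioritizing VRUs in the projected trajectory” could mean, the hypothetical function below gives a high weight to a VRU whose constant-velocity prediction enters the strip of road the car will cover within a short horizon, and a low weight otherwise. Such a weight could, for example, scale each object’s term in a detection loss. The straight-line ego path, the constant-velocity motion model and every parameter value are assumptions for illustration; the article presents this only as a research direction, not an implemented method.

```python
from dataclasses import dataclass


@dataclass
class VRU:
    x: float   # metres ahead of the ego vehicle at t = 0
    y: float   # lateral offset in metres (0 = centre of the ego path)
    vx: float  # ground-frame velocity components in m/s
    vy: float


def relevance_weight(vru, ego_speed=10.0, horizon_s=3.0, half_width_m=1.5,
                     dt=0.1, in_path=1.0, off_path=0.1):
    """Hypothetical priority weight: high if the VRU enters the ego path within the horizon."""
    reach_m = ego_speed * horizon_s  # distance the ego vehicle covers within the horizon
    steps = int(round(horizon_s / dt))
    for k in range(steps + 1):
        t = k * dt
        # Constant-velocity prediction of the VRU position at time t
        px = vru.x + vru.vx * t
        py = vru.y + vru.vy * t
        if 0.0 <= px <= reach_m and abs(py) <= half_width_m:
            return in_path
    return off_path


# Pedestrian who has finished crossing, 50 m ahead and walking away
print(relevance_weight(VRU(50.0, 4.0, 0.0, 1.4)))   # 0.1
# Pedestrian about to step into the car's path 20 m ahead
print(relevance_weight(VRU(20.0, -3.0, 0.0, 1.5)))  # 1.0
```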

Adding automatic tone mapping for automotive vision

As discussed, camera technology is a cornerstone of today’s ADAS. But it, too, has its shortcomings. Cameras based on visible light, for instance, perform poorly at night or in harsh weather conditions (heavy rain, snow, etc.). Moreover, regular cameras have a limited dynamic range, which typically results in a loss of contrast in scenes with difficult lighting conditions.

Some of those limitations can be offset by equipping cars with high dynamic range (HDR) cameras. But HDR cameras make signal processing more complex, as they generate high-bitrate video streams that could very well overburden the AI engines underlying ADAS.
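To give a sense of scale, the sketch below computes uncompressed bitrates for a single-channel raw sensor stream. The resolution, frame rate and bit depths (24 bits per pixel for HDR, 8 for LDR) are hypothetical values chosen for illustration, not figures from the article; real HDR sensors vary.

```python
def raw_bitrate_gbps(width: int, height: int, bits_per_pixel: int, fps: float) -> float:
    """Uncompressed video bitrate in Gbit/s."""
    return width * height * bits_per_pixel * fps / 1e9


# Hypothetical 1080p, 30 fps, single-channel (raw Bayer) streams
hdr = raw_bitrate_gbps(1920, 1080, 24, 30)
ldr = raw_bitrate_gbps(1920, 1080, 8, 30)
print(f"HDR: {hdr:.2f} Gbit/s, LDR: {ldr:.2f} Gbit/s")  # HDR: 1.49 Gbit/s, LDR: 0.50 Gbit/s
```

Under these assumptions, every HDR frame carries three times as many bits as its LDR counterpart before any downstream processing starts.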

Combining the best of both worlds, the IPI researchers from imec and Ghent University have developed an automatic tone mapping approach: a smart computational process that translates a high-bitrate HDR feed into low-bitrate, low dynamic range (LDR) images without losing any information crucial to automotive perception.

Losing data does not (necessarily) mean losing information

Jan Aelterman: “Tone mapping has existed for a while, with a generic flavor being used in today’s smartphones. But applying tone mapping to the automotive use case and pedestrian detection, in particular, calls for a whole new set of considerations and trade-offs. It raises the question of which data need to be kept and which data can be thrown away without risking people’s lives. That is the unique exercise we have been going through.”
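For contrast with the learned approach, here is a minimal sketch of a classic, hand-designed global tone mapping operator (the global operator of Reinhard et al.). It is shown only to illustrate what HDR-to-LDR compression does; it is not the researchers’ CNN. The key value of 0.18 is the customary default, and the luminance values in the example are made up.

```python
import math


def reinhard_global(luminance, key=0.18, eps=1e-6):
    """Map linear HDR luminance values (>= 0) into [0, 1) with Reinhard's global operator."""
    # Log-average luminance summarises the overall brightness of the scene
    log_avg = math.exp(sum(math.log(eps + lw) for lw in luminance) / len(luminance))
    out = []
    for lw in luminance:
        scaled = key * lw / log_avg          # map the scene's average brightness to the key value
        out.append(scaled / (1.0 + scaled))  # compress: very bright values approach 1
    return out


def to_8bit(values):
    """Quantise [0, 1) values to 8-bit codes, as in a low-dynamic-range frame."""
    return [min(255, int(round(v * 255))) for v in values]


# Made-up luminances: dark road, dim pedestrian, lit facade, oncoming headlight
hdr_luminance = [0.5, 5.0, 200.0, 50000.0]
codes = to_8bit(reinhard_global(hdr_luminance))
print(codes)
```

Because one fixed curve is applied to every pixel, such an operator cannot decide that a dim pedestrian matters more than a bright headlight; that scene-dependent choice is what a learned, perception-aware approach targets.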

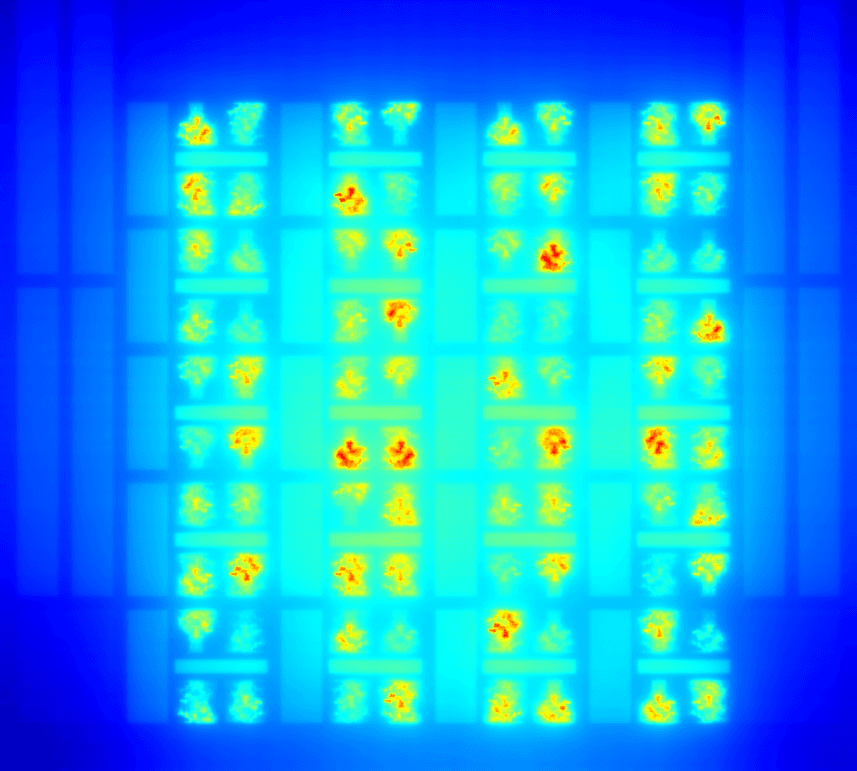

Fig 5: Evaluating the automatic tone mapping approach from the imec – Ghent University researchers (scenes on the right) against the use of existing tone mapping software (scenes on the left). Source: imec.

The result of their work is visualized in the picture above. It shows four scenes captured by an HDR camera. The images on the left-hand side have been tone mapped using existing software, while those on the right-hand side were produced with the researchers’ novel tone mapping approach based on a convolutional neural network (CNN).

“Looking at the lower left-hand side image, you will notice that the car’s headlights are showing in great detail. But, as a consequence, no other shapes can be observed,” says Jan Aelterman. “In the lower right-hand side image, however, the headlights’ details might have been lost – but a pedestrian can now be discerned.”

“This is a perfect illustration of what our automatic tone mapping approach can do. Using an automotive data set, the underlying neural network is trained to look for low-level image details that are likely to be relevant for automotive perception and throw away data deemed irrelevant. In other words: some data are lost, but we preserve all crucial information on the presence of vulnerable road users,” he adds.
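The article does not describe the network’s training objective. One hypothetical way to express “keep what matters for perception” is a reconstruction loss that penalizes errors inside annotated VRU regions more heavily than errors elsewhere. The sketch below assumes a reference image, a binary VRU mask and a weight of 10, all chosen for illustration only.

```python
def region_weighted_mse(predicted, target, vru_mask, vru_weight=10.0):
    """Weighted MSE over 2D images: pixels inside the VRU mask count vru_weight times more."""
    total, norm = 0.0, 0.0
    for pred_row, tgt_row, mask_row in zip(predicted, target, vru_mask):
        for p, t, m in zip(pred_row, tgt_row, mask_row):
            w = vru_weight if m else 1.0
            total += w * (p - t) ** 2
            norm += w
    return total / norm


target = [[0.2, 0.8]]  # hypothetical reference intensities
mask = [[0, 1]]        # second pixel belongs to an annotated pedestrian
loss_a = region_weighted_mse([[0.4, 0.8]], target, mask)  # error on the background pixel
loss_b = region_weighted_mse([[0.2, 1.0]], target, mask)  # same-size error on the pedestrian
print(loss_b > loss_a)  # True
```

With this weighting, a network could trade away detail in irrelevant regions (such as headlight glare) more cheaply than detail on a pedestrian.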

“Yet another benefit of our neural-network-based approach is that various other features can be integrated as well, such as noise mitigation or debayering algorithms, and ultimately even algorithms that remove artifacts caused by adverse weather conditions (such as heavy fog or rain).”

Displaying tone-mapped imagery in a natural way

Preserving life-saving information in an image is one thing. But displaying that information in a natural way is important too. It is yet another consideration the researchers took into account.

“Generic tone-mapped images sometimes look very awkward. A pedestrian could be displayed brighter than the sun, halos could appear around cyclists, and colors could be boosted to unnatural, fluorescent levels. For an AI engine, such artifacts matter little. But human drivers should also be able to make decisions based on tone-mapped imagery, for instance when the images are shown by a car’s digital rearview camera or side mirrors,” Aelterman says.

A 10% to 30% accuracy improvement

The visual improvements brought by the researchers’ automatic tone mapping approach are apparent. But does this also translate into measurable gains?

“Absolutely,” affirms Jan Aelterman. “Compared to using a standard dynamic range (SDR) camera, the number of pedestrians that remain undetected drops by 30%. And we outperform the tone mapping approaches described in the literature by 10% as well. At first sight, that might not seem like a lot, but in practice, every pedestrian that goes undetected poses a serious risk.”

Automatic tone mapping: the road ahead

“We are currently exploring automatic tone mapping’s integration into our group’s sensor fusion research pipeline. After all, these are two perfectly complementary technologies that make for significantly more powerful pedestrian detection systems,” he concludes.

Providing vendors with a competitive edge

As a university research team, the imec researchers do not build commercial pedestrian detection systems themselves. Instead, vendors can approach them for help in tackling the challenges of developing scene and video analysis solutions based on different sensors, sensor fusion and/or neural networks. By leveraging the concepts developed by the imec researchers, vendors of commercial pedestrian detection systems can gain a significant competitive edge.

Interested in exploring a concrete collaboration opportunity? Get in touch with the IPI research team via Ljiljana Platisa (ljiljana.platisa@imec.be).

This article was previously featured in FierceElectronics.

Published on: 15 October 2021