Today, AI still lives mostly inside datacenters. We interact with it at a distance, through the familiar devices of the internet era: laptops and smartphones. Although natural conversation could be the default way of using generative AI, most of us still choose to type and read rather than talk and listen.

That is, however, destined to change. Old habits die hard but are not immortal. The future of AI is to become a personal assistant woven into our daily lives. We’ll therefore need to interact with it more directly than through screens and keyboards, resulting in new demands on the underlying technologies.

From cloud-based to on-body AI

The trend toward personalized AI is already taking shape in the consumer device industry. In recent years, we’ve seen a wave of AI‑powered gadgets reach the market—glasses, pins, rings, earbuds—each experimenting with new ways for people to carry AI with them, with varying degrees of commercial success.


It seems the ‘killer product’ has not yet been invented. Still, these early explorations reveal two important takeaways.

1. The smartphone is not yet ready for retirement

At least in the short term, these AI-powered gadgets will serve as companions to our smartphones rather than replace them.

This is primarily a technical necessity: the extremely compact form factors of glasses, pins, rings, or earbuds don’t leave room for anything beyond basic on‑device processing. They rely on a larger device to handle the computational heavy lifting or to bridge the connection to more powerful cloud resources.

In theory, that companion device could be any small computing unit worn discreetly somewhere on the body, with a wireless connection to the AI gadget. But that would just add more weight, more hardware, and more friction to our daily lives.

After all, the smartphone has become too important—handling communication, payments, navigation, media, and countless other essentials—for us to abandon it anytime soon. It therefore makes more sense to extend the smartphone with AI companion capabilities, rather than introduce another device.

By extension, personal AI gadgets stand a better chance of adoption when their technologies, especially their connectivity options, are compatible with what most smartphones support.


2. AI companions will listen and look, rather than speak and show

Over the past years, the meaning of the term “smart glasses” has shifted.

Not long ago, the term evoked visions of wearables that layer rich digital graphics (augmented‑reality overlays) onto the world. But while those AR glasses have yet to mature into viable mainstream products, a different category has begun to take shape. AI glasses—lightweight frames equipped with cameras and microphones but offering little or no visual output—are showing more promise as the first personal AI companions capable of reaching a wide audience.

There is again a technological reason for this: integrating high-resolution displays and the electronics that drive them into a lightweight, stylish frame remains extremely difficult. But consumer behavior matters too. Surveys indicate that many people have little interest in AR technology. They prefer assistants that are more discreet and less “in your face” than full AR overlays.

But we do expect our personal AI assistants to stay closely connected to our daily lives. For them to be genuinely helpful, they need continuous awareness of our context: seeing what we see, hearing what we hear, and sometimes (as with smart rings) keeping up with our vital signs. Only then can they offer the right kind of support, when we actually want it.

All this leads to an asymmetrical flow of data. The wireless link from the AI companion device to its host (usually a smartphone) needs to support high‑rate, ultra‑low‑power uploads, because that’s where continuous sensing data (audio fragments, images, contextual cues) must travel. In the opposite direction, however, the data flow is lighter: the phone only needs to send occasional instructions or AI‑generated responses back to the device.
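To make the asymmetry concrete, here is a rough back-of-the-envelope sketch in Python. Every sensor and traffic figure in it (audio format, image size, capture interval, response size) is a hypothetical assumption chosen for illustration, not a number from this article or from any specific product.

```python
def audio_rate_kbps(sample_rate_hz: int, bits_per_sample: int, channels: int) -> float:
    """Raw (uncompressed) audio bit rate in kilobits per second."""
    return sample_rate_hz * bits_per_sample * channels / 1000


def periodic_rate_kbps(payload_bytes: int, period_s: float) -> float:
    """Average bit rate of a payload sent once every `period_s` seconds."""
    return payload_bytes * 8 / period_s / 1000


# Uplink (gadget -> phone), all values are illustrative assumptions:
# 16 kHz, 16-bit mono audio plus one 50 kB compressed image every 2 s.
uplink_kbps = audio_rate_kbps(16_000, 16, 1) + periodic_rate_kbps(50_000, 2.0)

# Downlink (phone -> gadget), illustrative assumption:
# one 500-byte instruction or response every 10 s.
downlink_kbps = periodic_rate_kbps(500, 10.0)

print(f"uplink:   {uplink_kbps:.1f} kb/s")
print(f"downlink: {downlink_kbps:.1f} kb/s")
print(f"ratio:    {uplink_kbps / downlink_kbps:.0f}x")
```

Under these assumed numbers the uplink carries on the order of a thousand times more data than the downlink. Real devices would compress audio and vary their capture rates, so the exact ratio will differ, but the direction of the imbalance is the point.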

From on-body to embodied AI

Looking further ahead, many imagine a future in which our AI assistants no longer rely on our bodies to act in the physical world. Instead of living on us, they could increasingly live alongside us in the form of embodied machines capable of performing real tasks autonomously.

The field of humanoid robotics is still young but advancing quickly. One challenge that emerges across the landscape is the need for extremely precise position and environment awareness. Humanoids must navigate complex indoor layouts where signal interference and multipath propagation are common, interact with tools, grasp objects reliably, and avoid collisions—all of which require spatial accuracy far beyond what classical industrial robots need.

Low-power wireless technologies enabling edge AI

Discussions about the future of AI once revolved almost entirely around models and software. More recently, attention has shifted to the hardware that powers those models: high‑performance compute, high‑bandwidth memory, and optical interconnects inside hyperscale data centers.

Meanwhile, the role of low‑power wireless technologies once AI moves into our personal physical space remains largely unexplored. The question is: can today’s wireless systems deliver the throughput, precision, and power efficiency that edge AI will demand?

The most commercially established low-power wireless technology today is Bluetooth. It already supports both data transfer and location-tracking use cases. Since the introduction of channel sounding, it achieves ranging accuracy of roughly 10 cm.

Ultra-wideband (UWB), while less ubiquitous than Bluetooth, has been gaining ground quickly. It is now deployed at scale in certain smartphones, vehicles, smart home devices, and industrial real-time location systems, supported by strong adoption in both consumer electronics and automotive sectors.

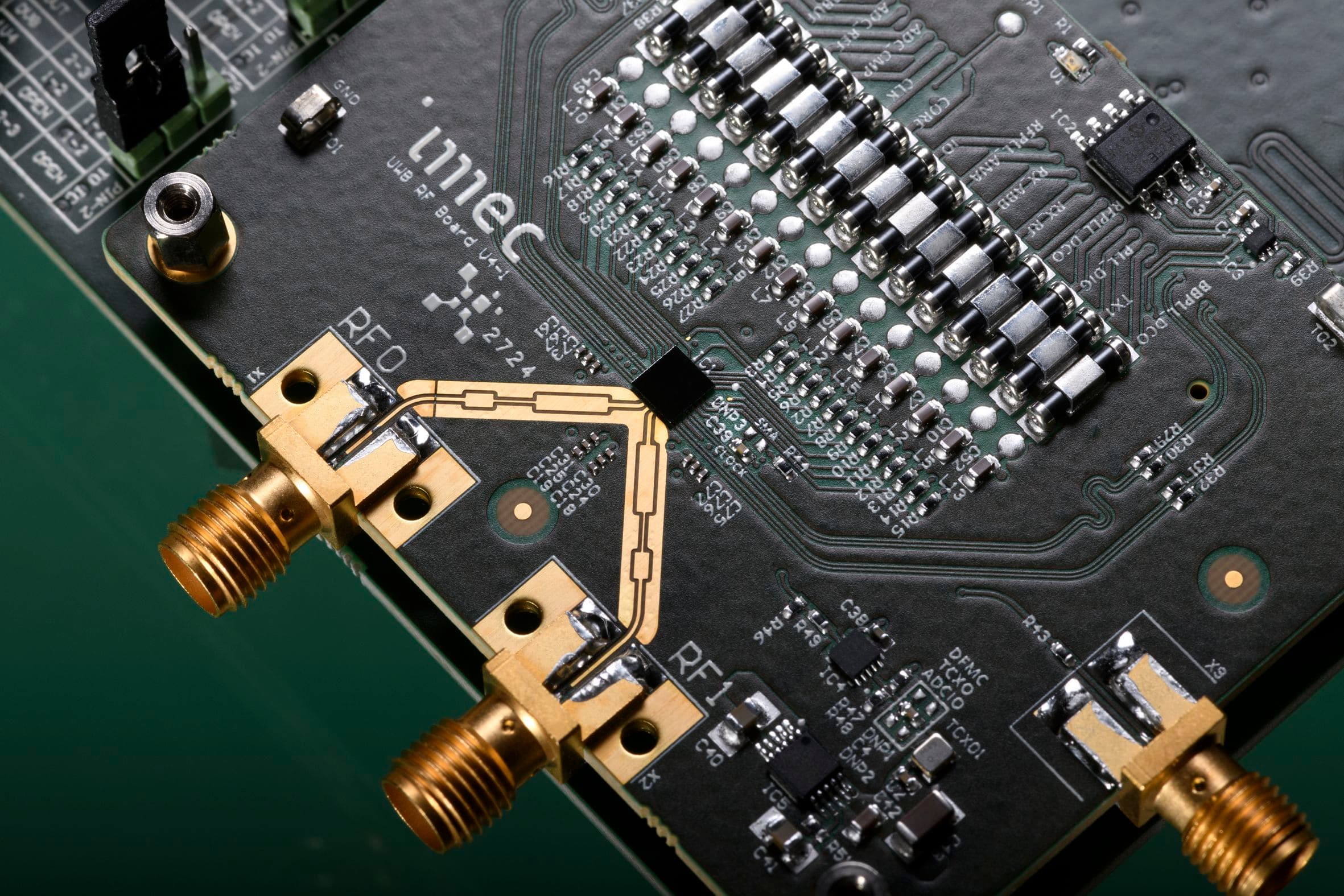

Although UWB’s power consumption is still greater than Bluetooth’s, it already offers sub-centimeter precision for spatial ranging. Ongoing algorithm and hardware innovations, including imec’s work on next-generation UWB, are further improving energy efficiency and pushing performance toward millimeter-level accuracy. This is the level of precision that future advanced robotics would need.

Imec’s Gen-4 UWB radio system.

Further advances on the horizon

Bluetooth and UWB have already come a long way, but further innovation is needed to prepare them for the short‑range data transfer and spatial‑awareness tasks that will link AI with the physical world. Two recent advances illustrate how quickly these technologies are rising to the challenge.

One example comes from imec’s latest generation of UWB technology. Its new transceiver introduces a breakthrough on the data‑communication front: it achieves a record‑high data rate of 124.8 Mb/s, the highest rate still compatible with the upcoming IEEE 802.15.4ab standard. This is about 18 times faster than the 6.8 Mb/s data rates used in today’s UWB ranging and communication profiles. The improvement stems from co‑optimization of both the analog front‑end and the digital baseband.
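As a quick sanity check on what the higher rate means in practice, the short Python sketch below computes the ratio of the two quoted data rates and the ideal airtime for a single payload. The 100 kB payload size is a hypothetical assumption, and the calculation ignores preambles, headers, and retransmissions, so real transfer times would be longer.

```python
# Data rates quoted in the text, in megabits per second.
LEGACY_RATE_MBPS = 6.8
NEW_RATE_MBPS = 124.8


def transfer_time_ms(payload_bytes: int, rate_mbps: float) -> float:
    """Ideal airtime in milliseconds, ignoring all protocol overhead."""
    bits = payload_bytes * 8
    return bits / (rate_mbps * 1e6) * 1e3


speedup = NEW_RATE_MBPS / LEGACY_RATE_MBPS

# Hypothetical payload: a 100 kB compressed image (an assumption for illustration).
PAYLOAD_BYTES = 100_000
legacy_ms = transfer_time_ms(PAYLOAD_BYTES, LEGACY_RATE_MBPS)
new_ms = transfer_time_ms(PAYLOAD_BYTES, NEW_RATE_MBPS)

print(f"speedup:          {speedup:.1f}x")
print(f"airtime at 6.8:   {legacy_ms:.1f} ms")
print(f"airtime at 124.8: {new_ms:.2f} ms")
```

The ratio of the two rates works out to roughly 18. For the assumed payload, ideal airtime drops from about 118 ms to about 6 ms, which matters for power because a radio that finishes sooner can return to sleep sooner.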

Crucially, the higher throughput does not come at the cost of energy efficiency. The transceiver delivers very low energy per bit, substantially lower than Wi‑Fi, especially at the transmit side. This makes it ideal for the asymmetrical data flows between AI gadgets, such as smart glasses, and their companion devices.

A second advance is the introduction of narrowband (NB)-assisted UWB operation. This approach not only unlocks more advanced ranging capabilities, quadrupling the operational range to as much as 100 meters, but also uses a transceiver architecture that can later support emerging standards such as Bluetooth Higher Bands. This expansion of Bluetooth into higher-frequency bands promises higher data throughput, lower latency, and improved positioning accuracy.

Conclusion

As AI moves from distant datacenters into the intimate spaces of our daily lives, the demands on short‑range wireless technologies will only grow. Future AI companions, whether worn on our bodies or embodied in autonomous machines, will depend on radios that can move data efficiently, sense space precisely, and operate for long periods on tiny power budgets.

Bluetooth and UWB are already evolving rapidly in that direction, and the latest advances show just how quickly they are adapting to the needs of edge intelligence.

Want to explore these technologies in more detail for your application? Click the contact button below to set up a meeting with our team.

Published on: 21 April 2026